PUBLISHER: Global Market Insights Inc. | PRODUCT CODE: 1959313

AI Accelerator Chips Market Opportunity, Growth Drivers, Industry Trend Analysis, and Forecast 2026 - 2035

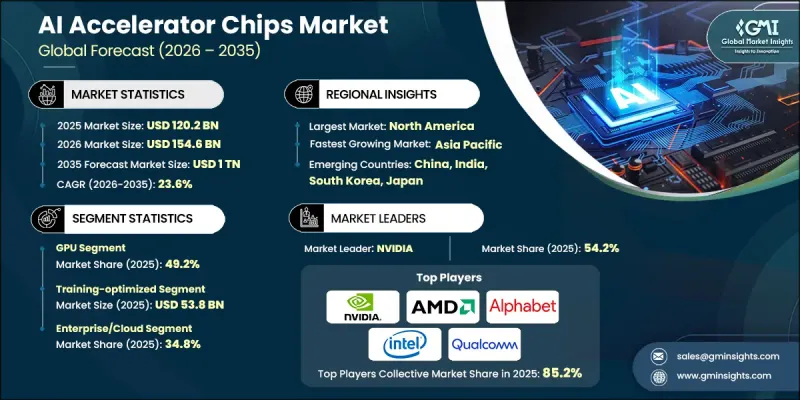

The Global AI Accelerator Chips Market was valued at USD 120.2 billion in 2025 and is estimated to grow at a CAGR of 23.6% to reach USD 1 trillion by 2035.

Market expansion is fueled by escalating hyperscale infrastructure investments, rising demand for high-performance inference acceleration in data centers, and the rapid commercialization of generative AI applications across enterprises. Organizations are increasingly deploying AI workloads across cloud-native, hybrid, and on-premise environments, requiring purpose-built silicon capable of delivering higher throughput, lower latency, and improved energy efficiency. Simultaneously, the proliferation of edge AI use cases is intensifying the need for compact, power-efficient accelerators that enable real-time processing closer to the data source. As model architectures evolve and computational complexity rises, enterprises are prioritizing scalable hardware solutions optimized for both training and inference tasks. The growing reliance on AI-driven automation, predictive analytics, and intelligent decision systems across industries continues to reinforce demand for specialized accelerator chips, positioning the market for sustained high-growth momentum through 2035.

| Market Scope | |
|---|---|
| Start Year | 2025 |
| Forecast Year | 2026-2035 |
| Start Value | $120.2 Billion |
| Forecast Value | $1 Trillion |
| CAGR | 23.6% |
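The headline figures can be cross-checked with the standard compound-growth formula. The short Python sketch below is illustrative only and is not part of the report's forecast model; the function names are hypothetical, and it assumes the 23.6% CAGR compounds annually over the ten years from the 2025 start value to 2035.

```python
# Illustrative check of the headline figures; not the publisher's forecast model.
# Assumption: the CAGR compounds annually from the 2025 start value through 2035.

def project_value(start_value: float, cagr: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return start_value * (1.0 + cagr) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Back out the constant annual growth rate linking two values."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


START_USD_BN = 120.2    # 2025 market value, USD billion (from the report)
CAGR = 0.236            # 23.6% (from the report)
YEARS = 2035 - 2025     # ten compounding periods (assumption)

projected_usd_bn = project_value(START_USD_BN, CAGR, YEARS)
rate_to_1_trillion = implied_cagr(START_USD_BN, 1000.0, YEARS)

print(f"Projected 2035 value: USD {projected_usd_bn:,.1f} billion")
print(f"CAGR implied by USD 1 trillion in 2035: {rate_to_1_trillion:.1%}")
```

Under these assumptions the projection lands close to USD 1 trillion, so the stated start value, growth rate, and forecast value are mutually consistent.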

A major growth catalyst for the AI accelerator chips market is the rising investment by hyperscale cloud providers in inference-optimized silicon designed to manage large-scale AI service delivery. As generative AI platforms expand globally, providers are under pressure to balance operational cost, computational performance, and latency. This has intensified the shift toward custom-designed accelerators tailored specifically for AI inference workloads. At the same time, governments across multiple regions are investing substantial funding in their domestic semiconductor ecosystems to strengthen technological sovereignty and accelerate AI chip innovation. The market has also witnessed a strategic pivot from general-purpose processing architectures toward workload-specific accelerator designs. Since the early 2020s, advancements in model architectures have highlighted performance and efficiency limitations in conventional GPU-based systems, prompting a transition to more specialized silicon. This evolution is expected to continue through 2030 as AI models increase in size and complexity, driving improvements in performance-per-watt efficiency and reshaping competition across both hardware and software co-design ecosystems.

In 2025, the GPU segment accounted for a 49.2% share of the market. GPUs continue to dominate because they adapt to diverse AI workloads, including large-scale training, inference, and mixed operational models across hyperscale data centers and enterprise AI platforms. Their mature software ecosystems, compatibility with widely adopted AI development frameworks, and seamless integration with existing computing infrastructure contribute significantly to their sustained market leadership. Continuous architectural enhancements and expanded developer toolchains further strengthen the competitive edge of GPUs in AI deployments at scale.

The training-optimized segment generated USD 53.8 billion in 2025, supported by ongoing investments in large model development and foundational AI research initiatives. Hyperscalers, research institutions, and enterprises are allocating substantial capital toward building increasingly complex models that require immense computational density, high-speed interconnectivity, and expanded memory bandwidth. Training-focused accelerators are engineered to support distributed computing environments and large dataset processing, enabling faster convergence times and improved scalability for advanced AI applications.

The North America AI Accelerator Chips Market captured a 39.8% share in 2025, reflecting strong regional leadership in AI infrastructure deployment. Growth across the region is driven by large-scale data center expansion, integration of accelerators into enterprise IT ecosystems, and increasing AI adoption within telecom and cloud environments. Both inference-optimized and training-optimized solutions are being deployed extensively to support generative AI services, real-time analytics, and advanced automation systems. The region's robust technology ecosystem, venture capital activity, and research-driven innovation further solidify its position as a key growth hub within the global AI accelerator chips industry.
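The segment figures above mix percentage shares and absolute values, so a rough conversion makes them easier to compare. The Python sketch below is a back-of-the-envelope illustration, not data from the report: it assumes every share is measured against the same USD 120.2 billion 2025 total, and the derived numbers are implied values only.

```python
# Back-of-the-envelope conversion between shares and absolute values.
# Assumption: all 2025 shares refer to the same USD 120.2 billion market total.
# The derived figures are implied by that assumption, not quoted from the report.

TOTAL_2025_USD_BN = 120.2        # global market value, 2025 (from the report)

GPU_SHARE = 0.492                # GPU segment share, 2025 (from the report)
NORTH_AMERICA_SHARE = 0.398      # North America share, 2025 (from the report)
TRAINING_USD_BN = 53.8           # training-optimized segment, 2025 (from the report)

# Shares converted to implied absolute values
gpu_usd_bn = GPU_SHARE * TOTAL_2025_USD_BN
north_america_usd_bn = NORTH_AMERICA_SHARE * TOTAL_2025_USD_BN

# Absolute value converted to an implied share
training_share = TRAINING_USD_BN / TOTAL_2025_USD_BN

print(f"Implied GPU segment value:      USD {gpu_usd_bn:.1f} billion")
print(f"Implied North America value:    USD {north_america_usd_bn:.1f} billion")
print(f"Implied training-optimized share: {training_share:.1%}")
```

Note that the technology, workload, and regional breakdowns slice the same total along different dimensions, so these implied values should not be added together.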

Key companies operating in the Global AI Accelerator Chips Market include NVIDIA, AMD (Advanced Micro Devices), Intel, Qualcomm, Apple, Huawei, Google (Alphabet), Graphcore, Cerebras Systems, SambaNova Systems, Groq, Tenstorrent, Cambricon Technologies, Mythic AI, Enflame Technology, Etched.ai, Iluvatar CoreX, and MetaX Integrated Circuits. These industry participants compete through architectural innovation, proprietary software ecosystems, vertical integration strategies, and strategic partnerships aimed at capturing expanding demand across cloud, enterprise, and edge AI segments.

Companies in the AI Accelerator Chips Market are strengthening their competitive positions through aggressive investment in research and development, focusing on workload-specific chip architectures and energy-efficient designs. Strategic collaborations with hyperscalers, cloud providers, and enterprise customers enable co-development of customized silicon tailored to targeted AI applications. Many firms are building vertically integrated ecosystems that combine hardware, software frameworks, and developer tools to enhance customer retention and platform stickiness. Geographic expansion and domestic manufacturing initiatives are also prioritized to mitigate supply chain risks and align with government semiconductor policies.

Table of Contents

Chapter 1 Methodology and Scope

- 1.1 Market scope and definition

- 1.2 Research design

- 1.2.1 Research approach

- 1.2.2 Data collection methods

- 1.3 Data mining sources

- 1.3.1 Global

- 1.3.2 Regional/Country

- 1.4 Base estimates and calculations

- 1.4.1 Base year calculation

- 1.4.2 Key trends for market estimation

- 1.5 Primary research and validation

- 1.5.1 Primary sources

- 1.6 Forecast model

- 1.7 Research assumptions and limitations

Chapter 2 Executive Summary

- 2.1 Industry 360° synopsis, 2022 - 2035

- 2.2 Key market trends

- 2.2.1 Technology type trends

- 2.2.2 Workload type trends

- 2.2.3 End-use industry trends

- 2.2.4 Regional trends

- 2.3 TAM Analysis, 2026-2035

- 2.4 CXO perspectives: Strategic imperatives

Chapter 3 Industry Insights

- 3.1 Industry ecosystem analysis

- 3.1.1 Supplier Landscape

- 3.1.2 Profit Margin

- 3.1.3 Cost structure

- 3.1.4 Value addition at each stage

- 3.1.5 Factors affecting the value chain

- 3.1.6 Disruptions

- 3.2 Industry impact forces

- 3.2.1 Growth drivers

- 3.2.1.1 Hyperscaler demand for data-center AI inference acceleration

- 3.2.1.2 Expanding use of AI accelerators in telecom network optimization

- 3.2.1.3 Government investments in domestic AI semiconductor ecosystems

- 3.2.1.4 Growth of edge AI applications requiring low-latency processing

- 3.2.1.5 Rapid deployment of generative AI workloads across enterprises

- 3.2.2 Industry pitfalls and challenges

- 3.2.2.1 High development costs and long chip design cycles

- 3.2.2.2 Supply chain dependence on advanced foundry nodes

- 3.2.3 Market opportunities

- 3.2.3.1 Custom AI accelerators for industry-specific workloads

- 3.2.3.2 Edge AI accelerator adoption in industrial automation

- 3.3 Growth potential analysis

- 3.4 Regulatory landscape

- 3.4.1 North America

- 3.4.2 Europe

- 3.4.3 Asia Pacific

- 3.4.4 Latin America

- 3.4.5 Middle East & Africa

- 3.5 Porter’s analysis

- 3.6 PESTEL analysis

- 3.7 Technology and Innovation landscape

- 3.7.1 Current technological trends

- 3.7.2 Emerging technologies

- 3.8 Emerging Business Models

- 3.9 Compliance Requirements

Chapter 4 Competitive Landscape, 2025

- 4.1 Introduction

- 4.2 Company market share analysis

- 4.2.1 By region

- 4.2.1.1 North America

- 4.2.1.2 Europe

- 4.2.1.3 Asia Pacific

- 4.2.1.4 Latin America

- 4.2.1.5 Middle East & Africa

- 4.2.2 Market concentration analysis

- 4.3 Competitive benchmarking of key players

- 4.3.1 Financial performance comparison

- 4.3.1.1 Revenue

- 4.3.1.2 Profit margin

- 4.3.1.3 R&D

- 4.3.2 Product portfolio comparison

- 4.3.2.1 Product range breadth

- 4.3.2.2 Technology

- 4.3.2.3 Innovation

- 4.3.3 Geographic presence comparison

- 4.3.3.1 Global footprint analysis

- 4.3.3.2 Service network coverage

- 4.3.3.3 Market penetration by region

- 4.3.4 Competitive positioning matrix

- 4.3.4.1 Leaders

- 4.3.4.2 Challengers

- 4.3.4.3 Followers

- 4.3.4.4 Niche players

- 4.3.5 Strategic outlook matrix

- 4.4 Key developments

- 4.4.1 Mergers and acquisitions

- 4.4.2 Partnerships and collaborations

- 4.4.3 Technological advancements

- 4.4.4 Expansion and investment strategies

- 4.4.5 Digital transformation initiatives

- 4.5 Emerging/startup competitor landscape

Chapter 5 Market Estimates and Forecast, By Technology Type, 2022 - 2035 (USD Million)

- 5.1 Key trends

- 5.2 NPU

- 5.3 GPU

- 5.4 ASIC

- 5.5 FPGA

- 5.6 Others

Chapter 6 Market Estimates and Forecast, By Workload Type, 2022 - 2035 (USD Million)

- 6.1 Key trends

- 6.2 Training-optimized

- 6.3 Inference-optimized

- 6.4 Hybrid

Chapter 7 Market Estimates and Forecast, By End-Use Industry, 2022 - 2035 (USD Million)

- 7.1 Key trends

- 7.2 Automotive

- 7.3 Consumer electronics

- 7.4 Telecommunications

- 7.5 Scientific/HPC

- 7.6 Enterprise/cloud

- 7.7 Others (financial services, industrial, retail, media, healthcare)

Chapter 8 Market Estimates and Forecast, By Region, 2022 - 2035 (USD Million)

- 8.1 Key trends

- 8.2 North America

- 8.2.1 U.S.

- 8.2.2 Canada

- 8.3 Europe

- 8.3.1 Germany

- 8.3.2 UK

- 8.3.3 France

- 8.3.4 Spain

- 8.3.5 Italy

- 8.3.6 Russia

- 8.4 Asia Pacific

- 8.4.1 China

- 8.4.2 India

- 8.4.3 Japan

- 8.4.4 Australia

- 8.4.5 South Korea

- 8.5 Latin America

- 8.5.1 Brazil

- 8.5.2 Mexico

- 8.5.3 Argentina

- 8.6 Middle East and Africa

- 8.6.1 South Africa

- 8.6.2 Saudi Arabia

- 8.6.3 UAE

Chapter 9 Company Profiles

- 9.1 Global Key Players

- 9.1.1 NVIDIA

- 9.1.2 AMD (Advanced Micro Devices)

- 9.1.3 Intel

- 9.1.4 Google (Alphabet)

- 9.1.5 Qualcomm

- 9.1.6 Apple

- 9.1.7 Huawei

- 9.2 Regional key players

- 9.2.1 North America

- 9.2.1.1 Cerebras Systems

- 9.2.1.2 Groq

- 9.2.1.3 SambaNova Systems

- 9.2.1.4 Tenstorrent

- 9.2.2 Asia Pacific

- 9.2.2.1 Cambricon Technologies

- 9.2.2.2 Enflame Technology

- 9.2.2.3 MetaX Integrated Circuits

- 9.2.2.4 Iluvatar CoreX

- 9.2.3 Europe

- 9.2.3.1 Graphcore

- 9.3 Niche Players/Disruptors

- 9.3.1 Etched.ai

- 9.3.2 Mythic AI